The Problem

A critical part of any production AI system is the ability to find relevant, accurate information to feed into LLMs. Get the retrieval wrong and the LLM hallucinates - no matter how good the model is.

Better retrieval means the LLM gets the right context on the first try - less work, faster responses, fewer tokens wasted.

What Was Built

RakanEmbed was trained on a single RTX 3060 Ti using Unsloth's embedding fine-tuning pipeline. A 4B parameter retrieval model that feeds LLMs the right context - reducing hallucination, cutting token usage, and speeding up responses. It connects ideas across different framings, so even when a query and the relevant document share no keywords, it finds the match.

How It Works in Production

RakanEmbed is the retrieval engine, but the full search pipeline has two steps. First, queries go through a rewriter (based on INF-X-Retriever) that distills complex, messy questions into clean search intent. Then RakanEmbed takes that clarified query and finds the right documents.

The rewriter handles the "what are you actually asking?" part. RakanEmbed handles the "where's the answer?" part. Together, they make reasoning-heavy search work reliably in production.

Performance: Sub-second embedding inference. Single forward pass, no iterative retrieval loops. The model is hosted on Modal as a serverless GPU endpoint.

The Results

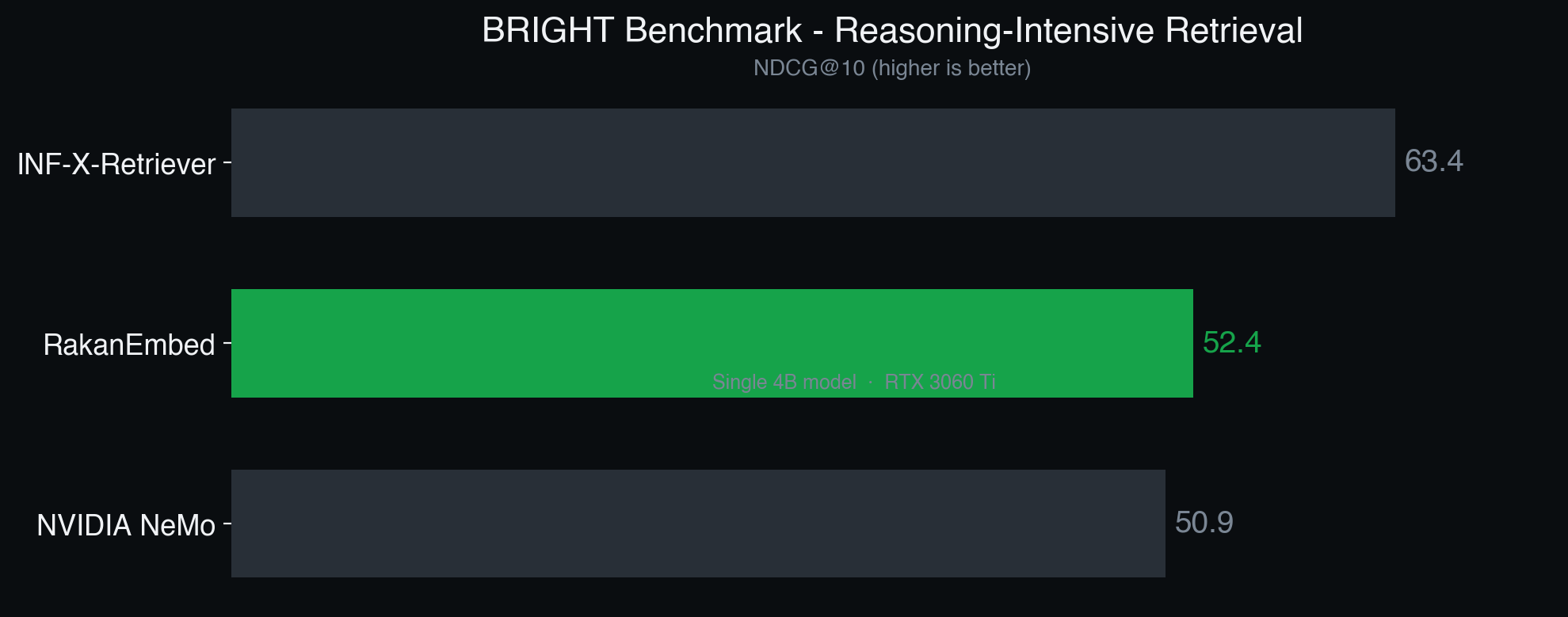

RakanEmbed was tested against 12 different domains - biology, economics, coding, math, psychology, and more - using the BRIGHT benchmark, a test designed specifically for reasoning-heavy retrieval where accurate context matters most.

RakanEmbed ranked #2 globally.

How it compares

RakanEmbed was trained on a single RTX 3060 Ti.

Standout domains

- Biology (0.66) - complex scientific question retrieval

- TheoremQA (0.64) - mathematical reasoning and proof retrieval

- Psychology (0.61) - nuanced behavioral science queries

- Economics (0.59) - linking concepts across different framings

For the full domain-by-domain breakdown, see the detailed benchmarks.

How It Was Built

The first attempts went nowhere. Off-the-shelf embedding models couldn't handle reasoning-heavy queries. Building complex pipelines using Large language models like Grok were too slow and too expensive to run as retrievers. Standard fine-tuning approaches on popular base models didn't move the needle.

Finding the right base model to fine tune from was half the battle. Most language models converted into embedding models start too low on the benchmark. Starting with a strong base model simplifies training.

From there it was iteration. Fine-tuning for reasoning-intensive retrieval using Unsloth's pipeline on a single RTX 3060 Ti. Each training run took weeks. Some approaches got shelved after days of waiting for results that didn't pan out. But each failed run narrowed down what worked.

The final model came from a combination of the right base model, the right training data, and enough patience to iterate through weeks of training.

Real-World Applications

- RAG pipelines - Right context on the first try means fewer tokens, faster responses, and less hallucination.

- Enterprise knowledge search - Employees get the right document regardless of how they phrase the question. Less time searching, more time working.

- Customer support - Accurate ticket-to-article matching means faster resolution and less escalation.

Trade-offs

Being honest about what RakanEmbed is and isn't:

- Depends on the query rewriter. RakanEmbed performs best with INF-X's query rewriting step. Without it, performance on complex reasoning queries drops. The rewriter adds a dependency but it's lightweight and open source.

- Not the #1 model. INF-X-Retriever scores 11 points higher. The gap comes from their reranking stage and larger retriever - things deliberately left out to keep the system simple and fast.

- Optimized for reasoning, not keyword search. For simple keyword-in, keyword-out retrieval, standard models work fine. RakanEmbed shines when the question requires actual understanding.

Why This Matters

This is the kind of depth that goes into every client project at RakanLabs. No off-the-shelf tools when the problem calls for something custom. Training models on consumer hardware, testing them against global benchmarks, and deploying them in production.

If your AI system needs better retrieval - or you want to test RakanEmbed on your own data - let's talk.